NeuralUSD

Goal

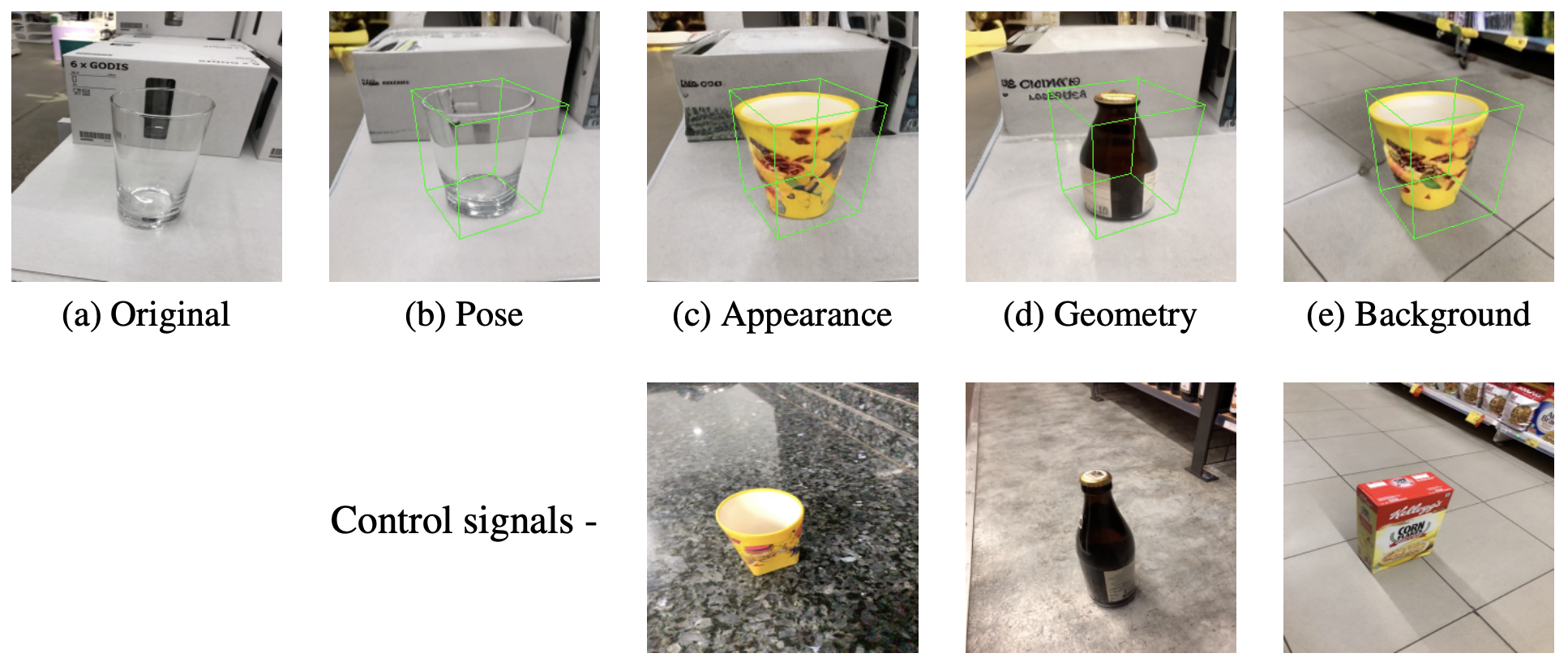

Goal of the paper is the get precise control over objects in scenes. The authors present Neural Universal Scene Descriptor (USD), a XML-like format for each object, which encodes per object appearance, geometry and pose information about it. They formulate the task as conditional generation (Stable Diffusion V2.1), synthesizing an image from a USD.

Method

The USD mainly contains the following information Appearance: DinoV2 Geometry: Depth (could also use other information) Poses: 2D/3D bounding boxes -> MLP

Other information like CLIP features, Normals, PointClouds etc. can be integrated in the future.

Everything is then concatenated and projected to the correct token size using MLP.

Supervising the diffusion process with individual images leads to entanglement of conditioning signals: model uses only appearance and geometry, disregards pose encodings.

Therefore they train on pairs of images (same scene, different viewpoints):

Src Image -> extract USD appearance and geometry features

Tgt Image -> extract USD object pose (2D/3D)

USD -> predict Tgt Image

Essentially you extract the appearance and geometry from source, and then you are supposed to render it form a different view (target).

To further disentangle the representation, they use modaility dropout similar to CFG (just zero out some of the information).

Results:

Thoughts:

- small quantiative eval. would be interested to see the disentanglemet - how can you measure that?

- not very detailed. what are the pose embeddings, how are they used? No code.